What Is Annotation Governance — and Why Most ML Teams Skip It

Machine learning systems rarely collapse overnight. They degrade gradually — and almost always silently.

Models that perform at 90%+ accuracy in staging environments can lose 15–25% of that performance within months of production deployment. The cause is rarely the model architecture. It is almost always the data layer — specifically, the accumulated drift in how training data was labeled.

Annotation governance is the discipline that prevents that drift from compounding into production failures.

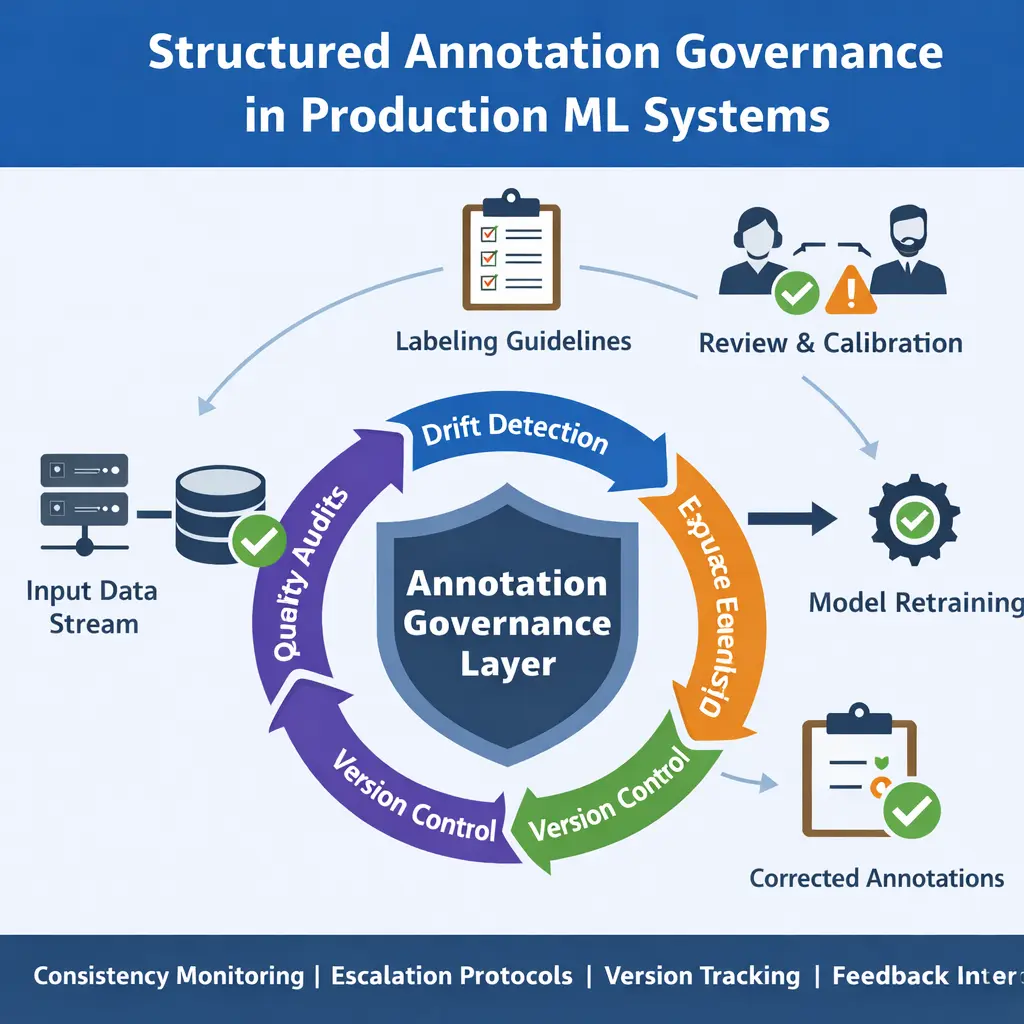

It refers to the structured processes, policies, and validation mechanisms that ensure labeling consistency, quality control, and version traceability across the full lifecycle of a machine learning system — from initial dataset creation through continuous production retraining cycles.

Annotation governance is not a one-time QA check. It is an ongoing operational system that treats the data labeling layer with the same engineering rigour as the model training pipeline. Without it, annotation quality decays at a rate that compounds with every retraining cycle.

Governance includes:

- Version-controlled labeling policy documentation with formal change management

- Periodic reviewer calibration sessions to maintain consistent interpretation

- Statistical inter-annotator agreement (IAA) tracking across all labeling batches

- Structured escalation and arbitration workflows for edge cases

- Continuous drift monitoring comparing historical and current label distributions

- Controlled retraining triggers — only after governance review, never reactively

Without these controls, annotation becomes operationally reactive rather than systematically governed — and production models degrade accordingly.

Why Annotation Drift Happens — The Five Root Causes

Annotation drift is not a single event. It is a gradual process driven by five compounding mechanisms, each of which is preventable with proper governance.

1. Reviewer Interpretation Variability

When labeling guidelines contain ambiguous boundary conditions, different reviewers apply different interpretations — especially for edge cases at the margin of class definitions. Even minor subjective differences compound at scale. In a dataset of 1 million labeled examples, a 2% interpretation divergence introduces 20,000 contradictory training signals.

2. Expanding Edge Case Exposure

As production data diversifies over time, new patterns emerge that challenge the original labeling assumptions. Categories that were clearly distinguishable at project launch become ambiguous in real-world deployment. Without formal processes to update guidelines when new edge cases emerge, reviewers handle them inconsistently.

3. Guideline Evolution Without Version Control

Teams frequently update labeling rules informally — via Slack messages, verbal instructions, or undocumented decisions. When these changes are not version-controlled and timestamped, datasets labeled before and after the change are incompatible but indistinguishable. The model trains on contradictory labels that appear identical in the dataset.
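One way to keep labels from different guideline versions distinguishable is to stamp every label with the version that was in force when it was created. The following is a minimal sketch of that idea, not a description of any particular tool: the `LabelRecord` fields, the `split_by_guideline` helper, and the version tags such as `"v1.2"` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class LabelRecord:
    """One labeled sample, stamped with the guideline version in force."""
    sample_id: str
    label: str
    annotator_id: str
    guideline_version: str  # hypothetical version tag, e.g. "v1.2"
    labeled_at: datetime


def split_by_guideline(records, current_version):
    """Separate records labeled under the current guideline from older ones."""
    current = [r for r in records if r.guideline_version == current_version]
    stale = [r for r in records if r.guideline_version != current_version]
    return current, stale


# Illustrative data only: two labels created under different guideline versions.
records = [
    LabelRecord("img-001", "pedestrian", "ann-7", "v1.1",
                datetime(2024, 3, 1, tzinfo=timezone.utc)),
    LabelRecord("img-002", "cyclist", "ann-3", "v1.2",
                datetime(2024, 6, 1, tzinfo=timezone.utc)),
]
current, stale = split_by_guideline(records, "v1.2")
print(len(current), "current,", len(stale), "to re-review")
```

With the version stored on each record, labels created before a rule change can be queued for re-review instead of being mixed silently into the training pool.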

4. Inconsistent Escalation Policies

Ambiguous cases handled differently across reviewers create systematic inconsistency in exactly the training examples that matter most — the edge cases where model decisions are least certain and most consequential.

5. Feedback Loop Instability

When model predictions inform future labeling decisions — a common pattern in Human-in-the-Loop annotation workflows — incorrect predictions can bias reviewer judgment. Reviewers anchor on model outputs rather than applying independent judgment, creating a feedback loop where model errors compound into future training data.

Annotation drift rarely announces itself in aggregate accuracy metrics. It appears first in segment-specific performance, in low-confidence prediction zones, and in review dispute rates — signals that most teams do not monitor systematically until aggregate performance has already declined significantly.

What We Observed Across 500K+ Annotated Samples

The following benchmarks come from internal quality audits of annotation projects delivered by Precise BPO Solution, which was established in 2008 and has more than 10 years of experience in data operations. The figures are aggregate observations across computer vision, NLP, and medical imaging datasets, anonymised to protect client confidentiality.

Pre- vs Post-Governance IAA and Accuracy Data

Across a sample of 500,000+ labeled images audited under our internal quality framework, we measured the following differences between projects with structured governance and those without it at project start:

Source: Precise BPO Solution internal annotation quality audit, aggregated across 500K+ labeled samples, 2023–2025. Data anonymised. Individual project results vary based on task complexity, domain, and dataset characteristics.

The most significant finding was the relationship between governance timing and rework cost. Projects where governance frameworks were applied at intake — before labeling began — required on average 74% less rework than projects where governance was introduced after labeling errors had already been identified in model evaluation.

This aligns with the cost compounding principle: a labeling error caught during annotation costs roughly 1× to fix. The same error caught during model evaluation costs 10–50×. Found in live production, the cost is orders of magnitude higher.

Governance Impact by Annotation Type (2023–2025)

Across our delivery portfolio, governance impact varied by task complexity. The highest inconsistency rates before governance were observed in semantic segmentation and medical imaging tasks — precisely the domains where boundary ambiguity is highest and edge cases most consequential.

Source: Precise BPO Solution internal project quality database, aggregated across 40+ annotation projects, 2023–2025. Data anonymised. Individual project results vary.

What Independent Research Says About Annotation Quality Failures

Our operational findings are consistent with — and in several cases more severe than — benchmarks reported in peer-reviewed research and industry studies. Three external sources are particularly relevant for teams evaluating the cost of annotation governance failures.

1. The Cost of Label Noise in Deep Learning (ICML Research)

Research published at leading machine learning conferences and replicated in multiple subsequent studies has reported that even modest levels of label noise (in the range of 10–20%) can reduce deep learning model accuracy by 15–30% on complex classification tasks, with the effect compounding non-linearly as noise increases. Models trained on noisy labels also generalise worse than their validation-set performance suggests, meaning the gap between staging and production performance is structurally larger in datasets with annotation inconsistency.

External source: Jiang et al. (2018), "MentorNet: Learning Data-Driven Curriculum for Very Deep Neural Networks on Corrupted Labels" (ICML 2018). This work belongs to the body of research on how corrupted labels degrade the generalisation of deep networks, which subsequent annotation quality research has built upon.

Our operational finding that 18.3% pre-governance inconsistency consistently produced 8–15% downstream accuracy degradation is broadly consistent with this line of research. The relationship is not anecdotal: it reflects a well-documented property of how neural networks respond to contradictory training signals.

2. Data Quality in Machine Learning Pipelines (Google AI Research)

Researchers at Google have described "data cascades": compounding events in which upstream data quality failures propagate through ML pipelines and cause disproportionate downstream harm. Their 2021 study interviewed 53 AI practitioners and found that the large majority had experienced at least one data cascade, while data work itself remained widely undervalued relative to model work.

The study specifically identified annotation inconsistency and evolving labeling standards as among the most common and least-monitored sources of data quality decay in production systems — exactly the failure mode our governance framework is designed to prevent.

External source: Sambasivan et al. (2021), "'Everyone wants to do the model work, not the data work': Data Cascades in High-Stakes AI" — ACM CHI 2021. This is one of the most-cited empirical studies on production ML data quality failures.

3. Inter-Annotator Agreement as a Quality Predictor (Computational Linguistics Research)

Substantial research in computational linguistics has established that Cohen's kappa and Fleiss' kappa are reliable predictors of downstream model performance — not just measures of labeler agreement. Studies have demonstrated that datasets with kappa below 0.80 produce statistically inferior model generalisation compared to datasets with kappa above 0.85, even when raw accuracy metrics appear similar at training time.

This underpins the specific thresholds we use in our governance framework: κ ≥ 0.85 as the operational target, and κ < 0.80 as the alert threshold requiring immediate calibration intervention.

External source: Artstein & Poesio (2008), "Inter-Coder Agreement for Computational Linguistics" — Computational Linguistics, MIT Press. The foundational reference on IAA measurement in annotation contexts, widely cited in annotation quality literature.
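To make the thresholds concrete, here is a minimal sketch of Cohen's kappa for two annotators, followed by a check against the κ ≥ 0.85 target and κ < 0.80 alert values quoted above. The function names and the intermediate `"watch"` status are assumptions introduced for illustration; they are not part of any cited framework.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same samples."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of samples where both labels match.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's per-class rates.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical class throughout
    return (observed - expected) / (1.0 - expected)


def iaa_status(kappa, target=0.85, alert=0.80):
    """Map a kappa value onto the thresholds quoted in the text."""
    if kappa >= target:
        return "ok"
    if kappa >= alert:
        return "watch"  # hypothetical middle band between alert and target
    return "alert"


# Illustrative data only: ten samples, one disagreement on the last item.
annotator_a = ["cat", "cat", "dog", "dog", "cat", "dog", "cat", "dog", "cat", "dog"]
annotator_b = ["cat", "cat", "dog", "dog", "cat", "dog", "cat", "dog", "cat", "cat"]
kappa = cohens_kappa(annotator_a, annotator_b)
print(round(kappa, 3), iaa_status(kappa))
```

Note that 90% raw agreement here yields a kappa of roughly 0.80, because half of that agreement would be expected by chance with two balanced classes. This is why the thresholds are stated in kappa rather than in raw agreement.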

The convergence between independent academic research and our operational data across 500K+ annotations gives us confidence that the governance thresholds and intervention triggers described in this article are generalisable — not specific to Precise BPO's project mix or client base. Teams operating without annotation governance are facing the same failure modes documented in peer-reviewed literature.

The Retraining Trap: Why More Training Cycles Don't Fix Drift

When production model performance declines, the default response for most ML teams is to retrain on fresh data. This feels logical — and in some cases it is the right intervention. But when annotation drift is the underlying problem, retraining without governance stabilisation actively makes the situation worse.

The mechanism is straightforward:

- If label definitions have shifted informally, new training data carries the same inconsistencies as old data — just more recent ones

- If reviewer consensus has weakened, freshly labeled batches introduce additional contradictory signals

- If edge cases are inconsistently handled, each retraining cycle trains the model on a new variant of the confusion

Retraining on unstable labels compounds variance rather than reducing it. Each cycle learns from a slightly different interpretation of the same categories, producing models with unstable decision boundaries that deteriorate faster with each subsequent retraining. Governance must stabilise the data layer before retraining is triggered — not after.

The correct intervention sequence is: detect drift → audit label consistency → stabilise guidelines → calibrate reviewers → retrain. Not: detect performance drop → retrain immediately.

Teams that retrain reactively without governance review typically find that each successive model version shows shorter periods of stable performance — a pattern that accelerates until the root cause in the annotation layer is addressed.

The Six-Layer Annotation Governance Framework

A mature annotation governance framework is not a single process. It is a layered system of interlocking controls, each addressing a different mechanism of quality decay. The following six layers represent the standard framework applied across enterprise annotation projects at Precise BPO Solution.

| # | Layer | What It Controls | How to Implement |
|---|---|---|---|
| 1 | Labeling Policy Documentation | Interpretation consistency; boundary condition handling | Version-controlled docs with inclusion/exclusion rules, domain exceptions, and ambiguous case definitions. Every update timestamped. |
| 2 | Reviewer Calibration Sessions | Inter-annotator agreement; drift from baseline | Periodic alignment exercises on gold-standard samples. Frequency: at project start, every major retraining cycle, and whenever IAA drops ≥3%. |
| 3 | IAA Tracking | Statistical consistency monitoring | Cohen's kappa or Fleiss' kappa on 5–10% of samples per batch. Target: κ ≥ 0.85. Alert threshold: κ < 0.80. |
| 4 | Escalation & Arbitration Workflow | Edge case consistency; disputed label resolution | Tiered review queue: primary annotator → senior reviewer → domain expert. All escalation decisions logged and fed back to policy documentation. |
| 5 | Drift Monitoring | Label distribution shifts; class boundary migration | Continuous comparison of historical vs. current label distributions. Statistical alerts for distribution shifts exceeding threshold. |
| 6 | Label Version Control | Dataset reproducibility; root-cause traceability | Every major guideline update tracked and timestamped. Datasets linked to the guideline version active at labeling time. Enables rollback and audit. |

IAA tracking (Layer 3) is the earliest and most actionable signal of governance breakdown. In our operational data, a drop in Cohen's kappa from 0.90 to 0.82 consistently predicted a 10–14% downstream model accuracy decline before that decline appeared in aggregate evaluation metrics. IAA is your leading indicator.
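Alongside IAA, the drift monitoring layer (Layer 5 in the table) compares historical and current label distributions. The sketch below shows one simple way to do that, using total variation distance between class frequencies. The choice of metric and the 0.05 alert threshold are assumptions made for illustration; the article does not prescribe a specific statistic, and a production system might prefer a chi-square test or a similar method.

```python
from collections import Counter


def label_distribution(labels):
    """Relative frequency of each class in a batch of labels."""
    if not labels:
        raise ValueError("cannot compute a distribution for an empty batch")
    counts = Counter(labels)
    return {cls: n / len(labels) for cls, n in counts.items()}


def total_variation_distance(p, q):
    """Half the summed absolute difference between two class distributions."""
    classes = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in classes)


def drift_alert(historical, current, threshold=0.05):
    """Return the distance and whether it exceeds the (assumed) threshold."""
    distance = total_variation_distance(
        label_distribution(historical), label_distribution(current)
    )
    return distance, distance > threshold


# Illustrative data only: a new "van" class appears and "car" shrinks.
historical = ["car"] * 70 + ["truck"] * 30
current = ["car"] * 55 + ["truck"] * 35 + ["van"] * 10
distance, alert = drift_alert(historical, current)
print(round(distance, 3), alert)
```

A distance of 0 means the class mix is unchanged and 1 means the two batches share no classes, so the threshold can be tuned per project against a known stable period.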

The Governance-Integrated ML Production Cycle

The following workflow integrates annotation governance at every stage of the production ML cycle — not just during initial labeling. This is the pattern applied across computer vision projects including automotive annotation, medical AI annotation, and retail AI annotation at enterprise scale.

Model deployed to production — monitoring activated

Real-time monitoring tracks prediction confidence distributions, low-confidence output rates, and segment-specific accuracy by class. Governance dashboards activated from day one of deployment.

Anomalous predictions flagged and routed

Low-confidence predictions, high-uncertainty outputs, and segment-specific accuracy drops automatically route flagged samples to a structured human review queue. Threshold for flagging set per project based on historical baseline.
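The routing step can be sketched as a confidence filter whose cut-off is derived from a historical baseline. Everything named here is hypothetical: the `Prediction` fields, the `baseline_threshold` helper, and the choice to flag the lowest 20% of historical confidences are illustrative assumptions rather than recommended settings.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    sample_id: str
    label: str
    confidence: float  # top-class probability in [0, 1]


def baseline_threshold(historical_confidences, fraction=0.05):
    """Confidence value at the given fraction of the sorted historical baseline."""
    if not historical_confidences:
        raise ValueError("need at least one historical confidence value")
    ordered = sorted(historical_confidences)
    index = min(int(fraction * len(ordered)), len(ordered) - 1)
    return ordered[index]


def route_for_review(predictions, min_confidence):
    """Split predictions into a human review queue and an accepted set."""
    review_queue = [p for p in predictions if p.confidence < min_confidence]
    accepted = [p for p in predictions if p.confidence >= min_confidence]
    return review_queue, accepted


# Illustrative data only.
history = [0.40, 0.55, 0.62, 0.70, 0.75, 0.80, 0.82, 0.85, 0.88, 0.90]
threshold = baseline_threshold(history, fraction=0.2)
predictions = [
    Prediction("s1", "tumour", 0.95),
    Prediction("s2", "benign", 0.58),
    Prediction("s3", "tumour", 0.61),
]
review_queue, accepted = route_for_review(predictions, threshold)
print(threshold, [p.sample_id for p in review_queue])
```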

Secondary reviewer validates or corrects labels

Flagged samples reviewed by a senior annotator against the current governance-controlled labeling guidelines. Corrections logged with reviewer ID, timestamp, and policy version active at review time.

IAA audit triggered on correction batch

Each batch of corrections undergoes inter-annotator agreement measurement before being added to the training pool. Batches below the kappa threshold are held for arbitration and policy review.

Policy updated if systematic edge cases identified

Correction patterns are aggregated to identify systematic edge cases not covered by current guidelines. Policy documentation updated with formal versioning. All team members calibrated to updated guidelines before labeling resumes.

Retraining triggered only after governance sign-off

Model retraining is not triggered reactively. It requires: governance review sign-off, IAA confirmation ≥0.85, and policy version confirmation that all correction-batch data is consistent with current guidelines.
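The three sign-off conditions can be expressed as a single gate that either allows retraining or lists the reasons it is blocked. This is a sketch under stated assumptions: `CorrectionBatch`, `retraining_allowed`, and the version strings are hypothetical names, and the only value taken from the text is the 0.85 kappa requirement.

```python
from dataclasses import dataclass


@dataclass
class CorrectionBatch:
    batch_id: str
    kappa: float            # IAA measured on this correction batch
    guideline_version: str  # guideline version the batch was labeled under


def retraining_allowed(batches, current_version, review_signed_off, min_kappa=0.85):
    """Return (allowed, reasons); retraining is allowed only if no reason blocks it."""
    reasons = []
    if not review_signed_off:
        reasons.append("governance review not signed off")
    for batch in batches:
        if batch.kappa < min_kappa:
            reasons.append(
                f"{batch.batch_id}: kappa {batch.kappa:.2f} below {min_kappa:.2f}"
            )
        if batch.guideline_version != current_version:
            reasons.append(
                f"{batch.batch_id}: labeled under {batch.guideline_version}, "
                f"current is {current_version}"
            )
    return not reasons, reasons


# Illustrative data only: the second batch fails the kappa requirement.
batches = [
    CorrectionBatch("b1", 0.88, "v1.2"),
    CorrectionBatch("b2", 0.81, "v1.2"),
]
allowed, reasons = retraining_allowed(batches, "v1.2", review_signed_off=True)
print(allowed, reasons)
```

Returning the blocking reasons, rather than a bare boolean, gives the governance reviewer a record of why a retraining request was held.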

Post-retraining audit — cycle resets

Post-deployment performance is benchmarked against pre-retraining baseline. Drift monitoring reactivated. Governance cycle continues as a permanent operational layer, not a one-time fix.

Warning Signs of Annotation Governance Breakdown

These signals typically appear before aggregate accuracy metrics decline — giving teams an intervention window if they are monitored systematically. Most teams do not monitor them, which is why governance failures are usually discovered late.

IAA score declining

Cohen's kappa dropping below 0.82 from a project baseline above 0.88 is the earliest quantitative signal of reviewer drift. Act before it crosses 0.80.

Review disputes increasing

Rising volume of escalated or disputed labels indicates that labeling guidelines are failing to cover emerging edge cases or that reviewers are interpreting boundaries differently.

Edge case volume growing

When the proportion of samples flagged as "edge cases" grows beyond 8–10% of batch volume, it typically indicates that the data distribution has shifted beyond what current guidelines cover.

Retraining frequency increasing without improvement

If each retraining cycle produces shorter periods of stable accuracy, the training data — not the model — is the source of instability. This is the clearest signal that governance intervention is required.

Segment-specific accuracy drop

When performance degrades in specific subclasses or edge-case categories while aggregate accuracy stays flat, annotation inconsistency in those specific categories is almost always the cause.
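A per-segment breakdown is what makes this signal visible. The sketch below computes accuracy per segment and lists segments that have dropped against a stored baseline. The segment names, the baseline values, and the 0.05 maximum drop are illustrative assumptions.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """records: iterable of (segment, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for segment, y_true, y_pred in records:
        total[segment] += 1
        correct[segment] += int(y_true == y_pred)
    return {segment: correct[segment] / total[segment] for segment in total}


def degraded_segments(baseline, current, max_drop=0.05):
    """Segments whose accuracy fell by more than max_drop versus baseline."""
    return sorted(
        s for s in baseline
        if s in current and baseline[s] - current[s] > max_drop
    )


# Illustrative data only: "night" samples degrade while "day" stays close to baseline.
records = (
    [("day", "car", "car")] * 9 + [("day", "car", "truck")]
    + [("night", "car", "car")] * 7 + [("night", "car", "truck")] * 3
)
baseline = {"day": 0.92, "night": 0.90}
current = accuracy_by_segment(records)
print(current, degraded_segments(baseline, current))
```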

Informal guideline changes

Any labeling rule communicated verbally or via chat without formal documentation and version control is a governance failure in progress. The impact may not be visible for weeks or months.

HITL as a Governance Control Layer — Not Just a Labeling Method

Human-in-the-Loop (HITL) is frequently described as a method for improving labeling efficiency through model-assisted annotation. In annotation governance, it plays a more important role: it is a real-time quality control mechanism that prevents model errors from compounding into the training data layer.

The governance-aware HITL pattern operates as follows:

- Model predictions on production data are monitored continuously for confidence and distribution drift

- Low-confidence or anomalous predictions are routed to human review before they can influence future training batches

- Human reviewers apply governance-controlled guidelines — not model output anchoring — ensuring independent validation

- Correction patterns are aggregated and fed back to the policy documentation cycle, closing the governance loop

This pattern is critical in domains where model feedback loops are most dangerous — medical AI annotation, autonomous vehicle datasets, and any application where model errors in production have material consequences.

HITL without governance is not a control layer; it is a reactive correction mechanism. The difference is whether human review is governed by version-controlled, consistently applied labeling policies or by ad hoc judgment. The former stabilises the training data layer; the latter introduces another source of inconsistency into it.

Our structured annotation governance workflows integrate HITL as a controlled, policy-governed layer — ensuring that human corrections improve data quality systematically rather than introducing new variability. See also: bounding box annotation quality standards, semantic segmentation QA protocols, polygon annotation consistency frameworks, text annotation governance, and landmark annotation QA.

Governance-integrated HITL is especially critical in high-stakes annotation domains. For agriculture AI annotation, label inconsistency in crop disease classification can propagate silently across entire training datasets. For sports action recognition, edge case inconsistency in motion boundary labeling directly degrades real-time inference accuracy. For content moderation annotation, reviewer drift in boundary cases creates regulatory and compliance exposure that aggregate accuracy metrics do not capture. In all these domains, HITL without governance is the same as QA without standards.

For 3D annotation tasks — including cuboid annotation for autonomous systems and polyline annotation for lane detection — governance complexity is higher still, since boundary ambiguity in 3D space is structurally harder to standardise than 2D annotation. These are precisely the domains where formal IAA tracking and version-controlled guidelines deliver the largest quality improvements.
