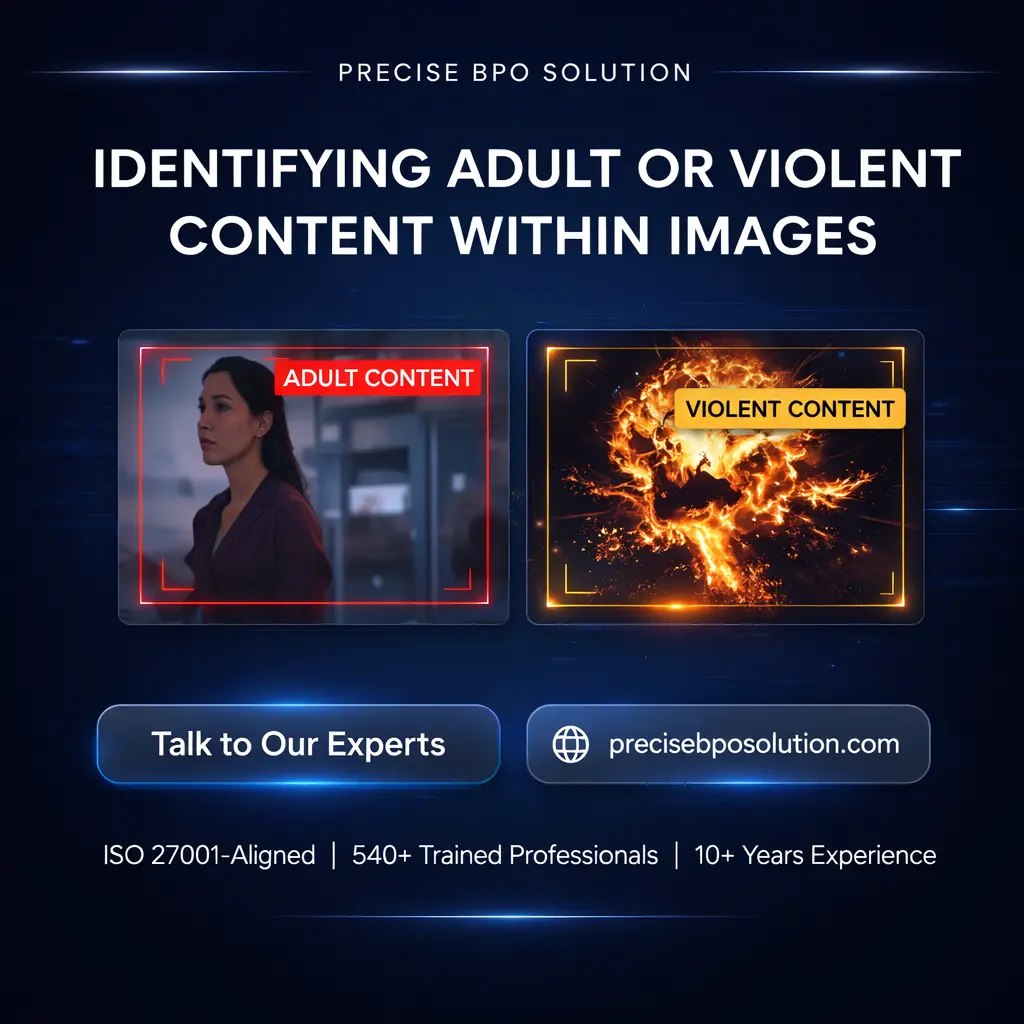

At Precise BPO Solution, we provide explicit content annotation services for organizations that require accurate, human-reviewed labeling of sensitive material. Our trained annotators manually examine images, videos, and text containing explicit, violent, or restricted material and classify them according to clearly defined guidelines. This service supports content moderation programs by ensuring consistent, policy-aligned labeling of sensitive data.

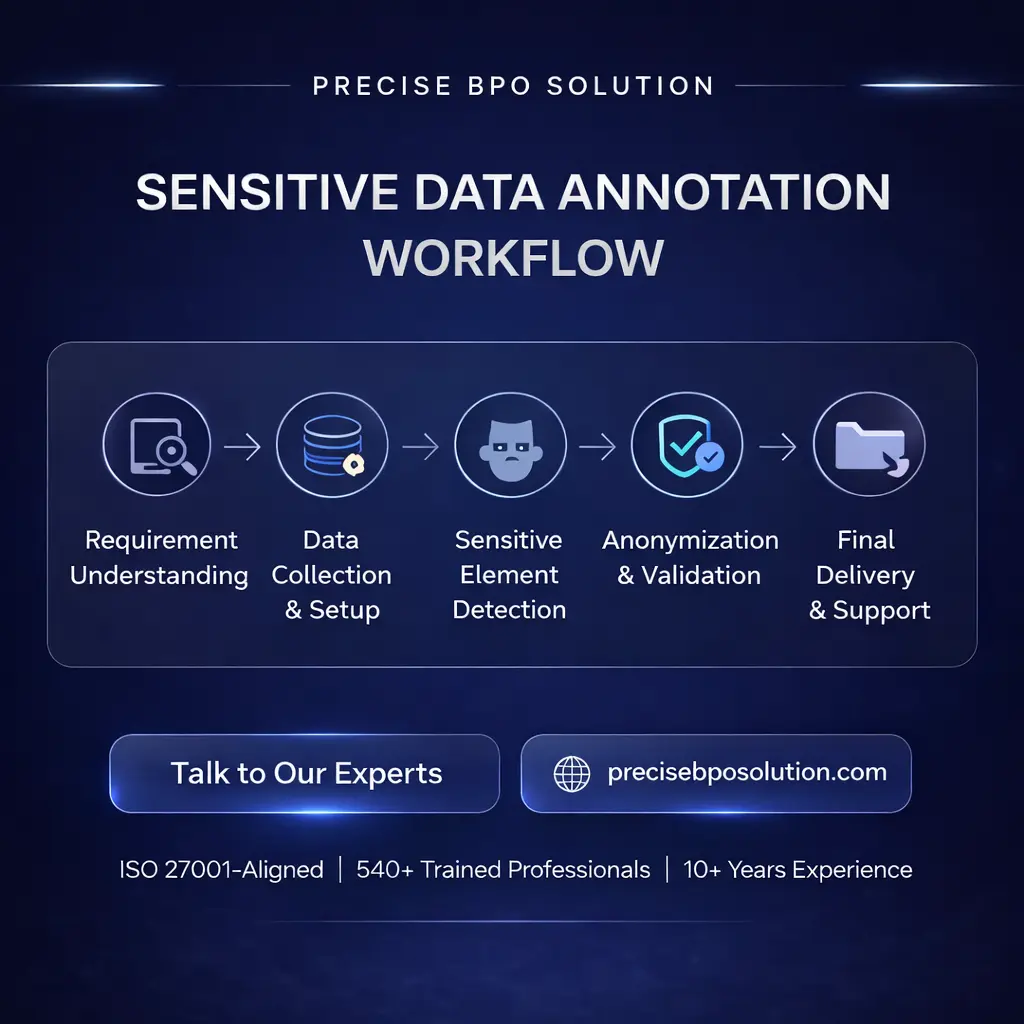

Our explicit content annotation process follows structured, multi-stage review procedures supported by experienced professionals and documented standards. Each dataset is reviewed to maintain contextual accuracy, consistency, and responsible handling of sensitive material, with emphasis on human judgment and interpretation rather than automated decision-making.

With 540+ trained annotators and over 10 years of experience, we have labeled more than 810 million images and related data assets across diverse data labeling programs. All work is delivered by our India-based teams operating under ISO 27001 and HIPAA- and GDPR-aligned processes, which provide secure handling, confidentiality, and controlled access throughout execution.

We support organizations across the US, UK, Europe, the Middle East, LATAM, APAC, and other global markets, helping them manage large-scale annotation requirements with consistency and reliability. Our services include image classification, text annotation, NSFW dataset labeling, content flagging, and multi-category moderation tasks, executed through controlled workflows designed to preserve data integrity, labeling consistency, and audit readiness.